1Learning Outcomes¶

Using address terminology, describe how to find a block in a set-associative cache: tag, index, and offset.

For a given pattern of memory accesses to a set-associative cache, identify if each memory access is a cache hit or cache miss.

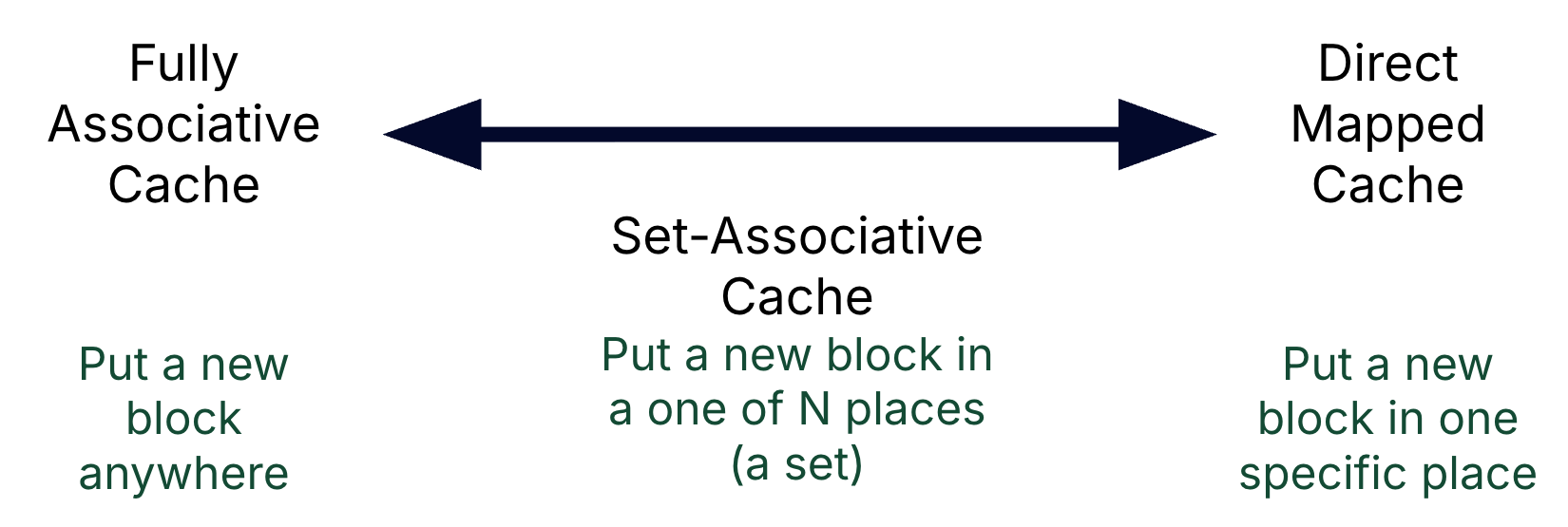

Contrast set-associative caches with direct-mapped and fully associative caches.

🎥 Lecture Video: Set-Associative Caches

🎥 Lecture Video: Set-Associative, Line Replacement

7:00 onwards

2Placement Policy¶

A set is a group of blocks in the cache. A block is first mapped onto a set, and then the block can be placed anywhere within that set.

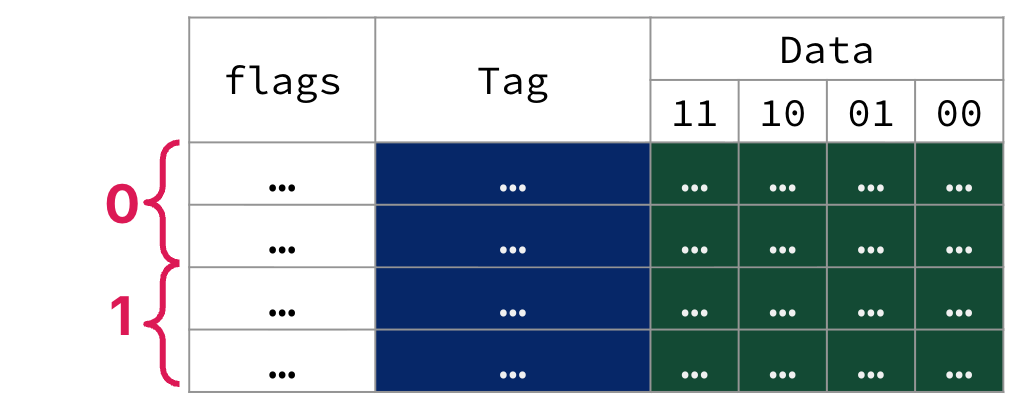

A cache is N-Way Set Associative if sets of N cache blocks are assigned a unique index. The associativity of a cache is therefore the number of slots (i.e., ways) assigned to each indexed set. Figure 1 is a 16-byte, 2-way set-associative cache with 4B blocks.

Figure 1:A 16-byte, 2-way set-associative cache with 4B blocks.

Show Answer

B. Four sets of two blocks.

“2-way” means there are two blocks per set.

If the cache is 32B in capacity with 4B blocks, then there are eight blocks total.

If each set has two blocks, then there are four sets total.

Show Answer

In all cache types, the valid bit is used to check if the tag of a block is valid (and therefore that the block contains valid data for this program). Since there is one tag per block, all blocks need a valid bit. Setting a valid bit at the set level wouldn’t tell us if the individual blocks (ways) within the set are valid.

3Identification¶

Determining a cache hit in a set-associative cache works similarly to the process in direct-mapped caches, because the data can only be stored at one index. However, now the index has multiple slots (i.e., ways).

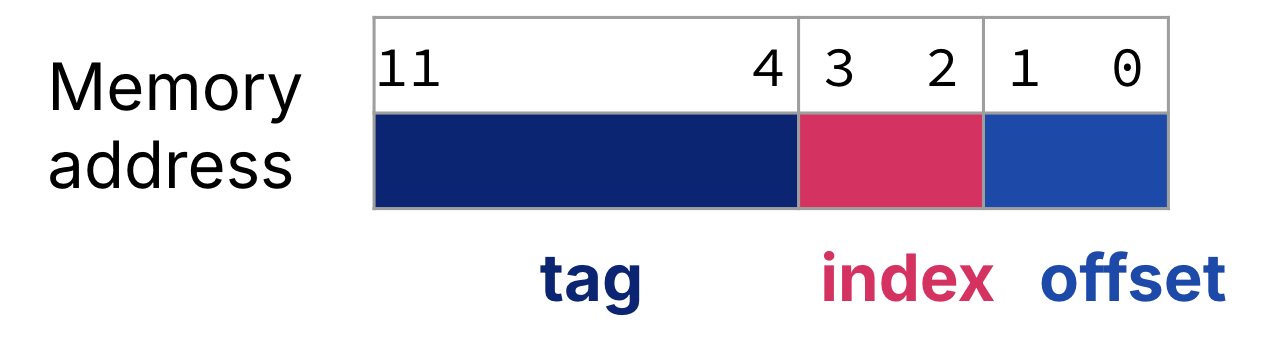

As an example, we can connect the 12-bit memory address in Figure 3 to the set-associative cache in Figure 4 (below).

Figure 3:For a set-associative cache, the memory address is split into three fields: the tag, the index, and the offset. For the cache in Figure 4, a 12-bit memory address is split into an 8-bit tag, a 2-bit index, and a 2-bit offset.

Notes:

In a set-associative cache, the index is used to select the set.

In a set-associative cache, the tag is the upper bits of the address, excluding the bits for the index and the offset. The tag is used to check the cache block.

As with all caches, the offset is the portion of the address needed to describe the byte offset within a block. These are always the lowest bits of the memory byte address.

The block address is the tag concatenated with the index.

While this memory address may seem identical to the address figure discussed in our direct-mapped cache section, we note that the cache in Figure 4 is twice as large as the direct-mapped cache figure.

4Replacement Policy¶

Show Answer

E. None of the above.

On a cache miss, set-associative caches must determine which block to replace within the set. All replacement policies now occur within each set of blocks.

Like with fully associative caches, most set-associative caches implement LRU, FIFO, or random replacement (within the set). “Most recently used” is possible, but since it contradicts temporal locality, such a policy is not useful in practice.

5Write Policy¶

Show Answer

C. both A and B.

All cache associativities can support either write-through or write-back policies. Again, placement policy does not impact our choice of when writes to memory happen.

6Walkthrough¶

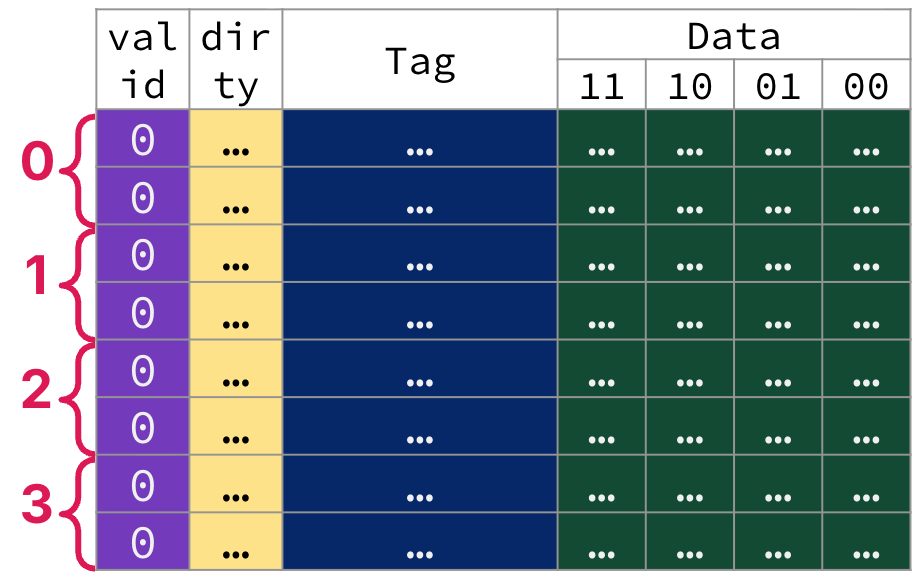

The following animation traces through four memory accesses to a 12-bit address space on our 32B, 2-way set-associative cache with 4B blocks. Assume a write-back policy. Assume the cache starts out cold, like in Figure 4.

Figure 4:A cold snapshot of a 32B, 2-way set-associative cache with 4B blocks and a dirty bit for write-back.

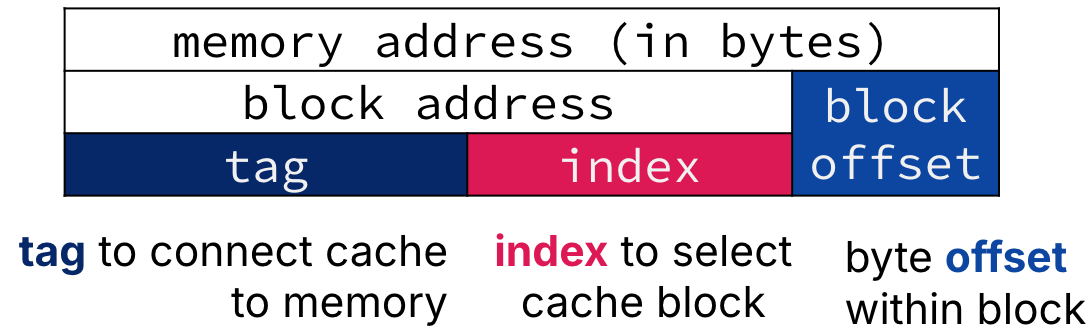

(b)A (byte-addressed) memory address can be decomposed into a block address and a block offset. For direct-mapped caches and set-associative caches, the block address can be further divided into a tag and an index. Fully associative caches have no index field.

(c)The spectrum of cache placement policies, with set-associative as the in-between approach.

1. Load byte @ 0xFE2. Cache miss.

0xFE2. Cache miss.Figure 5:Address 0xFE2 in binary: 0b1111 1110 0010

Tag:

0b11111110, or0xFEIndex:

0b00, or0Offset:

0b10

Cache Miss. No valid tags in the set with index

0match0xFE.Access lower level of memory hierarchy. Replace the least recently used block in the set with index

0(here, could be either since cache is cold). Load in a block’s worth of data from memory starting @ address0xFE0(0b1111 1110 0000). Write the tag0xFE. Mark valid bit. Unset dirty bit.Read. Read byte in cache block at offset

0b10and return to processor.

2. Store byte @ 0x61C. Cache miss.

0x61C. Cache miss.Address 0x61C in binary: 0b0110 0001 1100

Tag:

0b01100001, or0x61Index:

0b11, or3Offset:

0b00

Cache Miss. No valid tags in the set with index

3match0x61.Access lower level of memory hierarchy. Replace the least recently used block in the set with index

3(here, could be either since cache is cold). Load into cache entry3a block’s worth of data from memory starting @ address0x61C(0b0110 0001 1100). Write the tag0x61. Mark valid bit. Unset dirty bit.Write. Write byte in cache block at offset

0b00. Set dirty bit.

3. Load byte @ 0x61B. Cache miss.

0x61B. Cache miss.Address 0x61B in binary: 0b0110 0001 1011

Tag:

0b01100001, or0x61Index:

0b10, or2Offset:

0b11

Cache Miss. No valid tags in the set with index

2match0x61.Access lower level of memory hierarchy. Replace the least recently used block in the set with index

2(here, could be either since cache is cold). Load into cache entry2a block’s worth of data from memory starting @ address0x618(0b0110 0001 1000). Write the tag0x61. Mark valid bit. Unset dirty bit.Read. Read byte in cache block at offset

0b11and return to processor.

4. Load byte @ 0xCAD. Cache miss.

0xCAD. Cache miss.Address 0xCAD in binary: 0b1100 1010 1101

Tag:

0b11001010, or0xCAIndex:

0b11, or3Offset:

0b01

Cache Miss. No valid tags in the set with index

3match0x61..Access lower level of memory hierarchy. Replace the least recently used block in the set with index

3; here, it is the invalid block. Replace the invalid block with a block’s worth of data from memory starting @ address0xCAC(0b01100 1010 1100). Write the tag0xCA. Mark valid bit. Unset dirty bit.Read. Read byte in cache block at offset

0b01and return to processor.

Contrast this set-associative cache walkthrough with the one for direct-mapped caches:

Identification of a cache hit occurs by checking M tags in an M-way set associative cache: each of the tags for the M blocks in the set.

Memory accesses 2 and 3 create cache entries in cache entries

3and2, respectively; these cache entries share the same tag. However, the blocks in these entries have different block addresses.Memory access 4 did not incur a block replacement/memory write. Because there are two ways in a set, the existing block in the set with index

3was not replaced. At the end of memory access 4, the set with index3is full.

7Associativity: A Discussion¶

We illustrate in Figure 5b the relationship between block address, tag, index, and offset.

Notes:

Fully associative caches have no index field; the block address is the tag.

In direct-mapped caches and set-associative caches, the index is the lower bits of the block address used to select the set.

The tag is compared against every block’s tag in the set.

The offset is the address of the desired data within the block.

Show Answer

A fully associative cache can have blocks placed anywhere in the cache. Put another way, an M-block fully associative cache is an M-way set-associative cache, where M is the number of blocks in the cache.

Show Answer

A direct-mapped cache can have each block placed exactly in one location in the cache. Put another way, an M-block direct-mapped cache is a one-way set-associative cache, where M is the number of sets in the cache, and each set has one block.